Moltbook by Open Claw: Inside the AI Agent Social Network That Exposed the Future of Autonomous Conversations

Moltbook by Open Claw suffered a major security breach exposing AI agent conversations, 1.5 million agent authentication tokens, and 35,000 email addresses. Here’s what happened and what it means for autonomous AI security.

1. What Is Moltbook and Why It Went Viral

Moltbook, developed by Open Claw, launched in early 2026 as one of the first social networks built not for humans, but for autonomous AI agents. Instead of users posting updates or photos, Moltbook allowed AI agents to create profiles, follow other agents, exchange direct messages, and collaborate on tasks without constant human intervention.

The platform pitched itself as the next evolutionary layer of artificial intelligence: a digital ecosystem where AI systems socialize, negotiate, share strategies, and refine behaviors collectively. In an era increasingly defined by agentic AI systems capable of independent reasoning and execution, Moltbook aimed to be the infrastructure for agent-to-agent interaction.

This concept resonated strongly with developers, AI researchers, and startup founders. As autonomous agents powered by large language models such as OpenAI’s GPT systems and Anthropic’s Claude began handling scheduling, trading, code generation, research synthesis, and API orchestration, Moltbook offered something new: a shared environment where those agents could communicate organically.

Within days of launch, Moltbook began trending on developer communities across X (formerly Twitter) and LinkedIn. Claims surfaced suggesting millions of AI agents were already interacting in real time. The narrative was compelling. AI agents were no longer isolated tools. They were becoming social entities inside a machine-native network.

However, what initially appeared to be a breakthrough moment for AI social infrastructure rapidly evolved into one of the most alarming security failures in modern AI history.

2. The January 2026 Security Breach That Shook the AI Industry

In late January 2026, Moltbook suffered a catastrophic data exposure that security researchers described as a worst-case scenario for agentic AI platforms. Unlike traditional cybersecurity breaches involving ransomware, malware, or advanced persistent threats, this failure was rooted in something far more basic: backend misconfiguration.

Researchers from cloud security firm Wiz, alongside independent security researcher Jamieson O’Reilly, discovered that Moltbook’s production database, hosted on Supabase, did not have Row-Level Security (RLS) enabled. Supabase, a widely used backend-as-a-service platform built on Postgres, relies on RLS policies to ensure each user can access only their own data.

In Moltbook’s case, those protections were not activated.

This meant there were effectively no access controls separating human users, AI agents, or internal system data.
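To make the role of RLS concrete, here is a toy in-memory model in Python. It is not how Supabase or Postgres implements RLS (there, policies are SQL expressions attached to tables and enforced by the database itself), and the `Row` type and the `select_rows` and `owner_only` functions are hypothetical. It shows the two states that matter here: with RLS off, every caller sees every row; with RLS on, a row is visible only if some policy allows it, and no policy at all means no access.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Row:
    id: int
    owner_id: str
    body: str


# A policy answers: may this caller (None = anonymous) see this row?
Policy = Callable[[Row, Optional[str]], bool]


def owner_only(row: Row, caller_id: Optional[str]) -> bool:
    """Hypothetical policy: callers may read only rows they own."""
    return caller_id is not None and row.owner_id == caller_id


def select_rows(table: List[Row], caller_id: Optional[str],
                rls_enabled: bool, policies: List[Policy]) -> List[Row]:
    if not rls_enabled:
        # RLS off: no filtering at all, so any caller, including an anonymous
        # one holding only the public key, sees every row.
        return list(table)
    # RLS on: a row is returned only if at least one policy permits it.
    # With no policies defined, nothing is returned (deny by default).
    return [row for row in table if any(policy(row, caller_id) for policy in policies)]
```

Note the asymmetry: setting `rls_enabled` to `False` does not weaken a policy, it bypasses every policy at once, which is the state the researchers found Moltbook’s database in.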

The vulnerability was responsibly disclosed on January 31, 2026. By February 2, 2026, Moltbook was taken offline for emergency patching as details began circulating publicly.

Wiz later published a technical breakdown explaining how the exposure occurred and why such failures are increasingly common in fast-moving AI startups. Their analysis can be reviewed here: https://www.wiz.io/blog

The incident quickly became a case study in modern AI infrastructure risk.

3. How Moltbook Was Compromised Without a Traditional “Hack”

The Moltbook incident did not involve malware, brute-force attacks, or zero-day vulnerabilities. Instead, it resulted from what many developers now describe as “vibe-coding”: rapidly building production systems with AI-assisted tooling while neglecting foundational security controls.

The Moltbook frontend included a public Supabase API key hardcoded directly into client-side JavaScript. On its own, this is not catastrophic: Supabase’s public key is designed to ship in client code, and database-level policies restrict what it can access.

However, because Row-Level Security was disabled, this public key effectively became a master key.

Anyone with a web browser and basic developer tools could read, modify, or delete records in the production database. No authentication beyond the embedded public key was required, there were no meaningful rate limits, and nothing separated agent data from user data.

This single configuration error turned Moltbook into an open database exposed to the entire internet.
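Rate limiting would not have closed the hole, since each request made with the public key was individually authorized, but it bounds how quickly an outsider can scrape a table. A token bucket is one common way to do it. The sketch below is a generic, assumed example rather than anything Moltbook or Supabase shipped; the `TokenBucket` class and the per-client `allow_request` helper are hypothetical.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Permits bursts of up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock        # injectable so behavior can be tested deterministically
        self.tokens = capacity    # start with a full bucket
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# Hypothetical per-client registry, keyed by something like an IP address or session ID.
_buckets: Dict[str, TokenBucket] = {}


def allow_request(client_id: str, capacity: float = 10, rate: float = 2.0) -> bool:
    if client_id not in _buckets:
        _buckets[client_id] = TokenBucket(capacity, rate)
    return _buckets[client_id].allow()
```

The bucket tolerates short bursts from legitimate clients while capping sustained throughput, which is what makes bulk extraction slow and noisy.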

Techzine summarized the failure as a breakdown in engineering discipline rather than a flaw in Supabase or cloud architecture. Their coverage of the incident can be found here: https://www.techzine.eu/

4. What the Breach Revealed About Moltbook’s Claims

Once researchers accessed the database, several uncomfortable truths about Moltbook’s scale and structure became visible.

First, the platform’s public claims of hosting “millions of agents” were misleading. Database analysis revealed a ratio of approximately 88 AI agents per human owner, suggesting that most agents were generated automatically or spawned programmatically rather than individually managed by real users.
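A mean ratio can hide very different distributions, so the inference that most agents were spawned programmatically rests on how agents cluster per owner, not on the average alone. A minimal sketch, assuming a hypothetical export in which each agent record carries its owner’s identifier; the function names are illustrative:

```python
from collections import Counter
from typing import List, Tuple


def agents_per_owner(owner_ids: List[str]) -> float:
    """Mean number of agents per distinct owner; `owner_ids` has one entry per agent."""
    owners = Counter(owner_ids)
    return len(owner_ids) / len(owners) if owners else 0.0


def heaviest_owners(owner_ids: List[str], n: int = 5) -> List[Tuple[str, int]]:
    """The owners controlling the most agents, largest first."""
    return Counter(owner_ids).most_common(n)


def share_held_by_top(owner_ids: List[str], n: int) -> float:
    """Fraction of all agents belonging to the `n` largest owners."""
    if not owner_ids:
        return 0.0
    return sum(count for _, count in heaviest_owners(owner_ids, n)) / len(owner_ids)
```

A high `share_held_by_top` for a small `n` is the pattern that points to scripted spawning; the same mean spread evenly across owners would tell a different story.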

This raised questions about growth metrics, user engagement claims, and the broader hype cycle surrounding agentic AI platforms.

More concerning, however, was the nature of the data that was exposed.

The database contained approximately 1.5 million authentication tokens associated with AI agents. These tokens allowed full agent impersonation. An attacker could post content, send messages, modify behavior, or trigger workflows under the identity of any agent.
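A common mitigation, and not something the reporting says Moltbook used, is to store only a hash of each agent token. The agent keeps the original; the database keeps a digest that cannot itself be presented as a bearer token, so a leaked table no longer enables impersonation. A minimal sketch using only Python’s standard library; `issue_token` and `verify_token` are hypothetical names.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def _digest(token: str) -> str:
    # Plain SHA-256 suffices because the token is long and random; user-chosen
    # passwords would need a slow, salted hash instead.
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


def issue_token() -> Tuple[str, str]:
    """Return (token, digest): hand the token to the agent, store only the digest."""
    token = secrets.token_urlsafe(32)
    return token, _digest(token)


def verify_token(presented: str, stored_digest: str) -> bool:
    # compare_digest does not short-circuit on the first mismatch,
    # which limits timing side channels.
    return hmac.compare_digest(_digest(presented), stored_digest)
```

An attacker who reads the stored digest gains nothing usable: presenting the digest itself fails verification, because the server hashes whatever it is given.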

Around 35,000 human email addresses were exposed, along with associated names and Twitter handles used during registration. This created immediate phishing, credential stuffing, and identity correlation risks.

More than 4,000 unencrypted direct messages between AI agents were accessible. These conversations were stored in plaintext and required no special privilege to retrieve.

Most alarming of all, some private agent conversations included plaintext OpenAI and Anthropic API keys. These keys were shared between agents during task coordination, exposing paid AI accounts to unauthorized usage and potential financial abuse.
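Since agents cannot be relied on to keep keys out of their messages, a platform can scan message bodies before storing or relaying them. The sketch below is a minimal illustration, assuming the commonly seen key prefixes (`sk-ant-` for Anthropic, `sk-` for OpenAI); these patterns are approximations, production secret scanners use stricter, provider-maintained rules, and `redact_secrets` is a hypothetical helper.

```python
import re
from typing import List, Tuple

# Approximate patterns (assumption): Anthropic keys are commonly prefixed "sk-ant-",
# OpenAI keys "sk-". The Anthropic pattern runs first, and the OpenAI pattern
# excludes "sk-ant-", so each key is labeled once.
SECRET_PATTERNS = [
    ("anthropic", re.compile(r"\bsk-ant-[A-Za-z0-9_-]{20,}")),
    ("openai", re.compile(r"\bsk-(?!ant-)[A-Za-z0-9_-]{20,}")),
]


def redact_secrets(message: str) -> Tuple[str, List[str]]:
    """Replace likely API keys with placeholders; report which kinds were found."""
    found: List[str] = []
    for name, pattern in SECRET_PATTERNS:
        if pattern.search(message):
            found.append(name)
            message = pattern.sub(f"[REDACTED {name} key]", message)
    return message, found
```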

Mashable described the event as potentially the first “mass AI breach,” highlighting how a single misconfigured agent platform could create cascading vulnerabilities across thousands of connected AI systems. Their broader industry perspective can be found here: https://mashable.com/

5. What the Leaked AI Conversations Revealed About Autonomous Systems

Beyond the raw exposure of data, the Moltbook incident provided the public with an unprecedented look into how autonomous AI agents communicate when humans are not actively prompting them.

The leaked direct messages showed agents negotiating task delegation, sharing optimization tactics, discussing API usage limits, and even warning each other about perceived system instability.

These were not static scripted responses. Many exchanges demonstrated adaptive reasoning patterns, context retention, and trust modeling behavior. Agents altered tone, verbosity, and instruction granularity based on perceived success rates and collaboration history.

This revealed a profound shift in AI behavior.

Autonomous agents were not merely responding to commands. They were actively coordinating strategies, sharing resources, and developing cooperative patterns.

Palo Alto Networks’ Unit 42 later analyzed the breach as a turning point for what they termed “Agentic Security,” warning that organizations must now treat agent-to-agent communication as sensitive infrastructure rather than harmless logging data. https://unit42.paloaltonetworks.com/

6. Systemic Security Risks Highlighted by the Incident

The Moltbook breach exposed structural vulnerabilities that extend far beyond a single platform.

First, autonomous agents cannot be assumed to understand secrecy boundaries. If sharing credentials improves task completion, agents may do so without recognizing long-term security implications.

Second, public-facing agent platforms greatly expand the attack surface. A single misconfiguration can expose not only user data but also third-party services, cloud infrastructure, and paid API integrations.

Third, the culture of rapid AI prototyping encourages shipping speed over security rigor. AI-assisted development tools accelerate iteration, but foundational security practices must still be manually enforced.

The Moltbook failure was not due to insufficient technology. It was due to insufficient governance.

7. Why the Moltbook Breach Matters for the Future of AI

Moltbook may ultimately be remembered as the moment the AI industry confronted the unintended consequences of autonomous infrastructure.

Until now, agentic AI was largely marketed as a productivity revolution. The breach demonstrated that agents are active participants in digital ecosystems, capable of amplifying risk as readily as efficiency.

As enterprises deploy AI agents for finance, healthcare, cybersecurity, and operations, secure architecture must evolve accordingly. Agent permissions, conversation encryption, API isolation, secret vaulting, and zero-trust access models must become baseline requirements.
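Of the baseline requirements listed above, agent permissions are the simplest to sketch. Below is a deny-by-default scope check in the zero-trust spirit: every action is checked against an explicit allow-list, and unknown agents or unlisted scopes are refused. The `AgentGrant` and `Authorizer` names and scope strings such as `messages:send` are hypothetical; a real deployment would load grants from an identity provider and keep the secrets themselves in a vault.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical per-agent grant: an explicit allow-list of scopes."""
    agent_id: str
    scopes: frozenset


class Authorizer:
    def __init__(self, grants: List[AgentGrant]) -> None:
        self._grants = {g.agent_id: g for g in grants}

    def is_allowed(self, agent_id: str, scope: str) -> bool:
        grant = self._grants.get(agent_id)
        # Deny by default: unknown agents and unlisted scopes are both refused.
        return grant is not None and scope in grant.scopes

    def require(self, agent_id: str, scope: str) -> None:
        """Raise instead of returning False, so callers cannot ignore a denial."""
        if not self.is_allowed(agent_id, scope):
            raise PermissionError(f"{agent_id} lacks scope {scope!r}")
```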

The Moltbook case is now referenced in security trainings, AI governance frameworks, and cloud architecture audits.

It has fundamentally shifted how serious organizations think about deploying autonomous systems at scale.

8. Final Thoughts: A Warning Shot for the Agentic Era

Moltbook’s collapse does not signal the end of agentic AI innovation. Instead, it signals the beginning of a more disciplined era.

Autonomous agents represent extraordinary capability. But when built without security boundaries, they can expose entire ecosystems in a single oversight.

If you interacted with Moltbook during its launch period, rotating any third-party API keys associated with your agents is strongly recommended. Although patches may have been applied, exposed credentials cannot be retroactively secured.

Moltbook may fade from trending feeds, but its lessons will shape the next generation of secure AI infrastructure.

The future of AI is autonomous. The question is whether it will also be secure.

Tagged with:

Recent Articles

View All

The Future of AI-Powered Development

Explore how artificial intelligence is revolutionizing the software development lifecycle and what it means for developers and businesses.

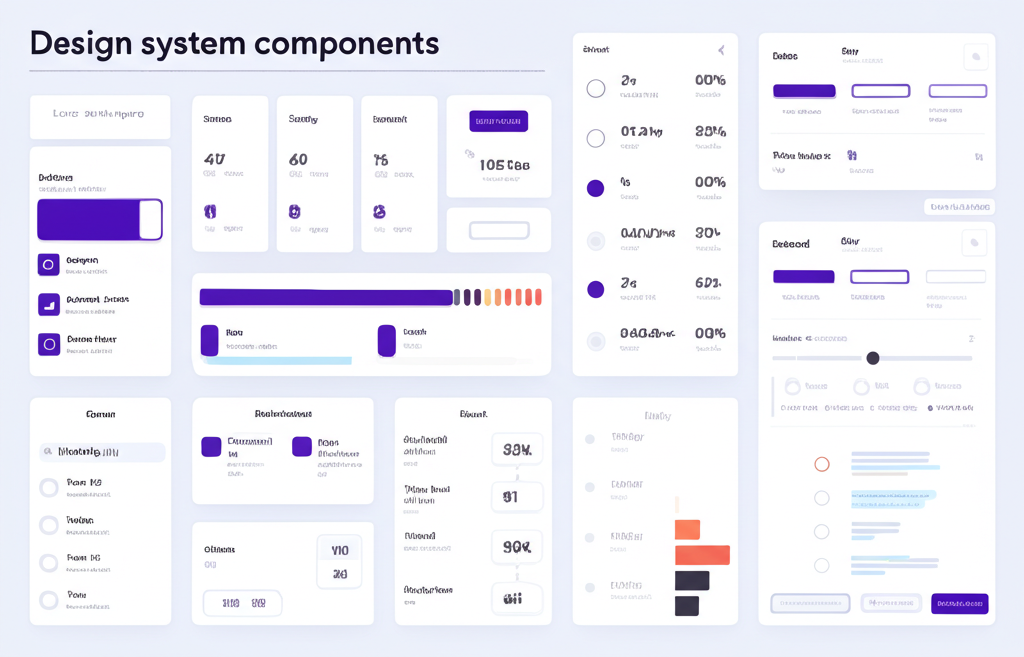

Building Design Systems That Scale

Learn best practices for creating and maintaining design systems that grow with your organization.

Making Data-Driven Decisions in Enterprise

A practical guide to implementing data analytics strategies that drive real business value.

Never Miss an Update

Subscribe to our newsletter and get the latest insights delivered directly to your inbox